Is Google Building an “Internet of Places”?

Editor’s note: Street Fight Lead Analyst Mike Boland contributed a chapter to Charlie Fink’s book, Convergence: How the World Will Be Painted with Data. We’ve excerpted part of that chapter below with permission from the publisher, and you can see more about the book here.

Google’s core business is search. AR is going to need visual search, a clickable search of the real world as seen through the digital camera. Point your phone at an item to get informational overlays about it.

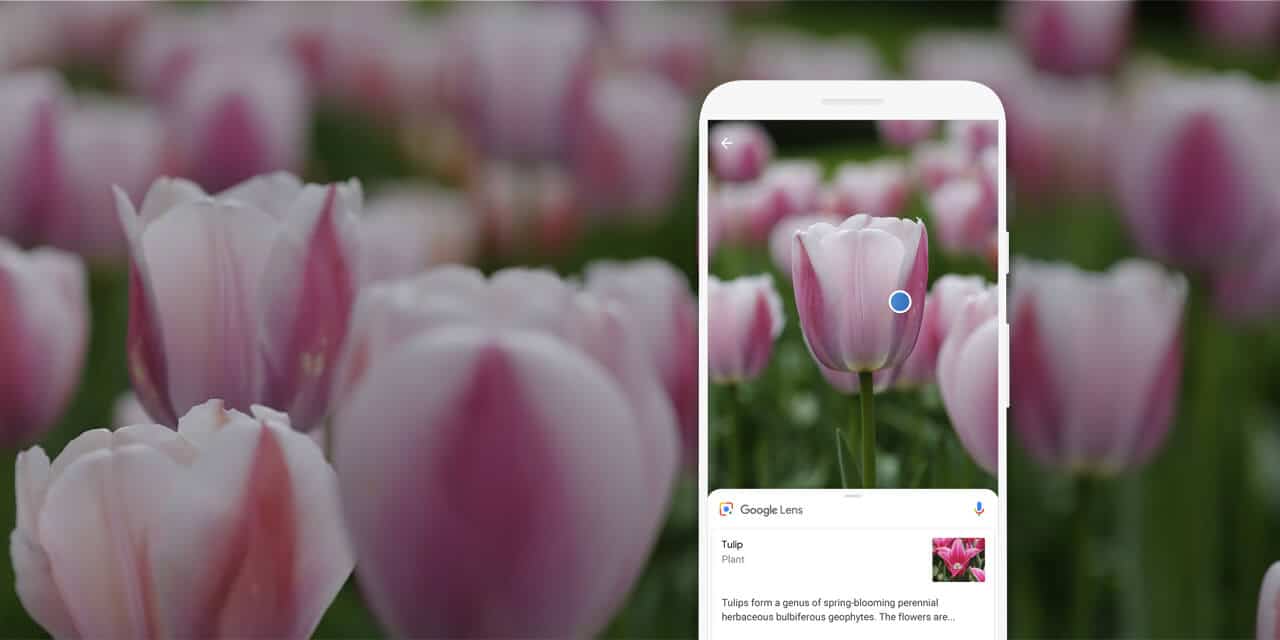

The current manifestation of this use of computer vision is Google Lens. Utilizing the camera, it returns informational overlays on items at which you point your phone. But instead of “10 blue links” as results, it returns one single answer—a paradigm Google has been cultivating for years with the Knowledge Graph and Google Assistant.

“The camera is not just answering questions, but putting the answers right where the questions are,” said Google’s Aparna Chennapragada at May 2018’s Google I/O.

Use cases will materialize over time, but it’s already clear that visual search can carry lots of commercial intent. Point your phone at a store or restaurant to get business details. Point your phone at a pair of shoes on the street to find out prices, reviews, and purchase info.

This proximity between the searcher and the subject indicates high intent, which means higher conversions and more money for Google. Moreover, visual search has the magic combination of frequency and utility, which could make it the first scalable AR use case: making the real world clickable.

Last Mile

In addition to Google Lens, visual search will take shape in Google’s visual positioning service (VPS). A sort of love child of Google Lens and Google Maps’ Street View, it will let users hold up their phones to see 3D navigational overlays on streets and in stores. For consumers, this has incredible value and appears to be free. For Google, this search of the physical world helps protect its core search business.

Ninety-two percent of the $3.7 trillion in U.S. retail commerce is spent in physical stores. Mobile interaction increasingly influences that spending, to the tune of $1 trillion per year. This is where AR could take the biggest bite, and Google knows it.

“Think of the things that are core to Google, like search and maps,” said Google’s Aaron Luber at ARiA @MIT Media Lab, in January 2018. “All the ways we monetize the Internet today will be ways that we think about monetizing with AR in the future.”

Index the World

The analogy for visual search is that the camera is the search box. Physical items are search terms, and informational overlays are results. So that raises the question, what’s the search index? Just as Google indexes the web with a relevance algorithm, what’s the physical-world equivalent?

The answer is the “Internet of Places,” another term for the AR Cloud. Google will get started with existing assets like its vast image database for AR object recognition. Street View imagery will power storefront identification in Google Lens, while its Maps API will arm AR apps with real-world geometry.

Similarly, Google’s gravitational pull allows it to get its hands on valuable data sets, such as 3D maps of building interiors like those of Lowe’s stores. And its Waymo autonomous vehicle (AV) division will generate even denser point clouds for AR, given the intensive 3D mapping needs of AVs.

This physical-world indexing strategy will continue to develop and hold priority for Google. The world will be painted with data, just as the web is a massive corpus of data whose organization has created trillions in value. Google, the incumbent Internet organizer, needs to cover the physical world itself if it is to own the search of the future.
