Visual Search and Local: A Match Made in Mountain View

This post is the latest in our “Visualizing Local” series. It’s our editorial focus for the month of May, including topics like artificial intelligence and marketing automation. See the rest of the series here.

One of our top picks for potential augmented reality killer apps is visual search. Its utility and frequency mirror those of search, and it hits several marks for potential killer-app status. It’s also monetizable, it’s a natural fit for local discovery, and Google is highly motivated to make it happen.

But first let’s step back and explain what we mean by “visual search,” as it can take a number of forms, such as Google image search. What we’re talking about here, by contrast, is pointing your phone at an item to get info about it, à la Google Lens. It’s just one of many flavors of AR that are developing.

Google is keen on visual search as a corollary to its core search business. This includes building an “Internet of Places,” which will be accessible through a visual front end like Google Lens. This works toward boosting Google search volume by creating additional inputs and modes, just like voice search.

“One way we think about Lens is we’re indexing the physical world — billions of places and products … much like search indexes the billions of pages on the web,” said Google’s Aparna Chennapragada at last week’s I/O event. “Sometimes the things you’re interested in are difficult to describe in a search box.”

New Lens features announced last week include real-time language translation when you point your phone at signage (think public transit). There’s also the ability to calculate restaurant tips, sort of like Snap’s new feature, and to get more information on restaurant menu items (see above).

This creates a nice local search and discovery use case for Google Lens. It already recognized storefronts, where Google uses Street View imagery for object recognition. But now the experience gets more granular in searching for specific menu items using Google My Business data.

“To pull this off, Lens first has to identify all the dishes on the menu, looking for things like the font, style, size, and color to differentiate dishes from descriptions,” said Chennapragada. “Next, it matches the dish names with relevant photos and reviews for that restaurant in Google Maps.”
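The two steps Chennapragada describes can be sketched roughly in Python. This is an illustration, not Google’s implementation. It assumes OCR has already produced text lines with a font size and a bold flag. The `MenuLine` type, the 1.15 size threshold, the function names, and the sample data are all hypothetical, and the `place_data` dictionary merely stands in for the photos and reviews held in Google Maps.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class MenuLine:
    """One line of OCR output from a menu photo (hypothetical structure)."""
    text: str
    font_size: float
    bold: bool = False


def find_dish_names(lines):
    """Guess which lines are dish names: bold, or clearly larger than the median line."""
    if not lines:
        return []
    typical_size = median(line.font_size for line in lines)
    return [
        line.text.strip()
        for line in lines
        if line.bold or line.font_size > 1.15 * typical_size  # assumed threshold
    ]


def match_dishes(dish_names, place_data):
    """Attach photos and reviews to each dish by case-insensitive name lookup."""
    index = {name.lower(): info for name, info in place_data.items()}
    return {name: index.get(name.lower()) for name in dish_names}


# Made-up sample data, for illustration only.
menu = [
    MenuLine("Mapo Tofu", 18, bold=True),
    MenuLine("Silken tofu in a spicy chili and bean sauce", 12),
    MenuLine("Dan Dan Noodles", 18, bold=True),
    MenuLine("Wheat noodles with minced pork and chili oil", 12),
]
place_data = {
    "mapo tofu": {"photos": ["mapo.jpg"], "reviews": ["Great heat."]},
}

dishes = find_dish_names(menu)
matches = match_dishes(dishes, place_data)
```

A dish with no entry in `place_data` maps to `None`, which mirrors the case where a menu item has no photos or reviews yet. A real system would presumably need fuzzy matching rather than an exact name lookup.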

Other use cases like language translation likewise tap into Google’s assets and knowledge graph: “What you’re seeing here is text-to-speech, computer vision, the power of translate, and 20 years of language understanding from search, all coming together,” said Chennapragada.

These existing assets, along with its motivation to make visual search happen, make Google a clear front runner in the race to deploy visual search. We’re seeing others such as Pinterest make a logical play in the same arena. Snapchat is also joining the race through a partnership with Amazon to identify products in the real world.

Though these visual search challengers could shine in niche use cases such as fashion items, Google will rule as the best all-around utility for visual search. It has the deepest tech stack, and the substance (knowledge graph) to be useful beyond just a flashy novelty for identifying things visually.

The name of the game now is to get users to adopt it. Google Lens won’t be a silver bullet, but it will shine in a few areas where Google is directing users, such as pets and flowers. It will really shine in product search, which happens to be where monetization will eventually come into the picture.

But the first step is to accelerate adoption, and Google has shown that this is a priority. Over the past year, it’s taken several steps to place Google Lens front and center in users’ search experiences so they can find it. It wants to acclimate users to this new way to search and develop the habit.

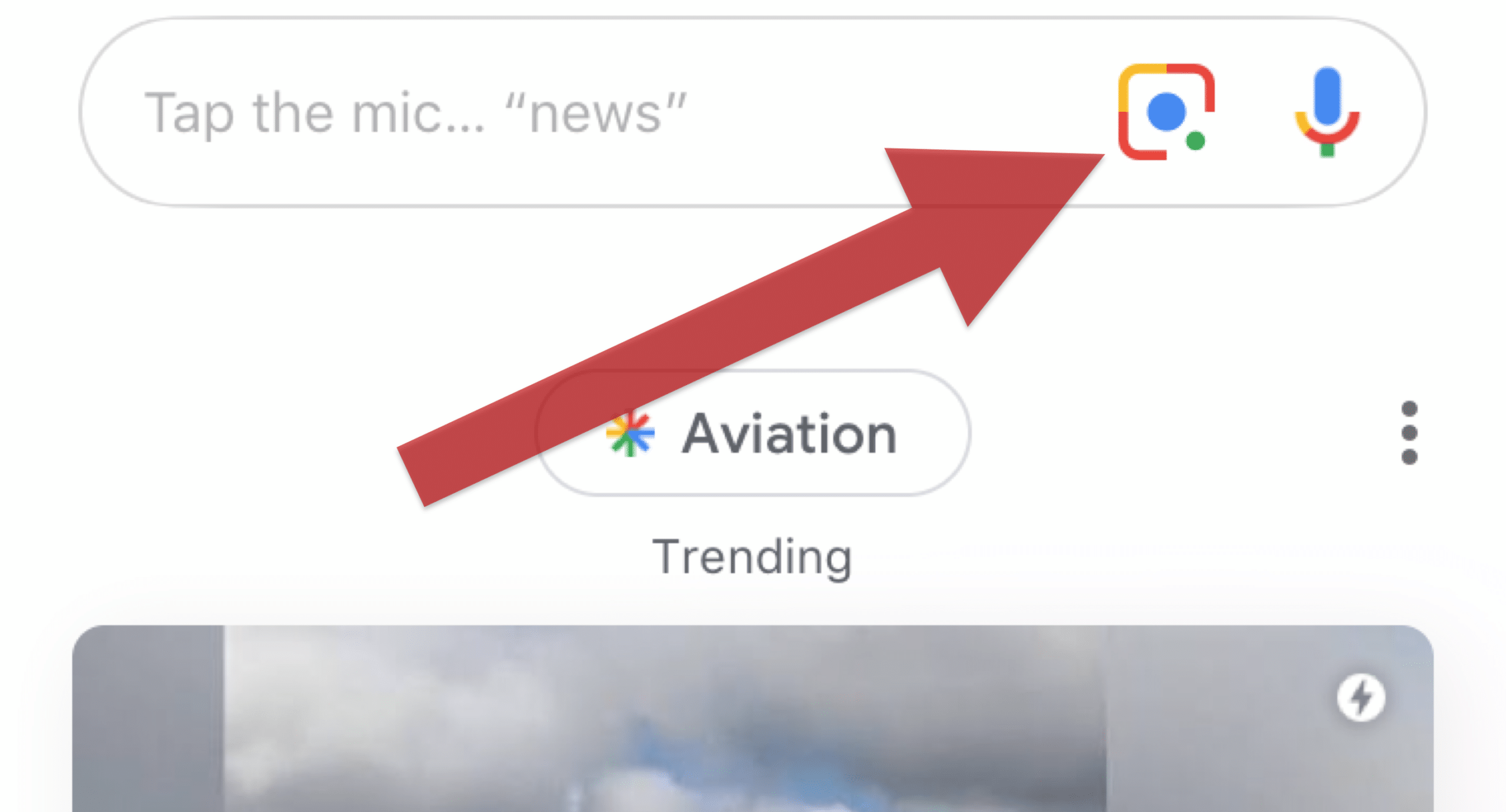

This includes last fall’s addition of a Google Lens button right within the iOS Google app. A small Lens icon now joins the voice search icon at the right side of the search bar (see above). And last week’s I/O event included several moves to “incubate” Google Lens in search so people can find it more easily.

“We’re excited to bring the camera to search, adding a new dimension to your search results,” said Google’s Aparna Chennapragada on stage. “With computer vision and AR, the camera in our hands is turning into a powerful visual tool to help you understand the world around you.”

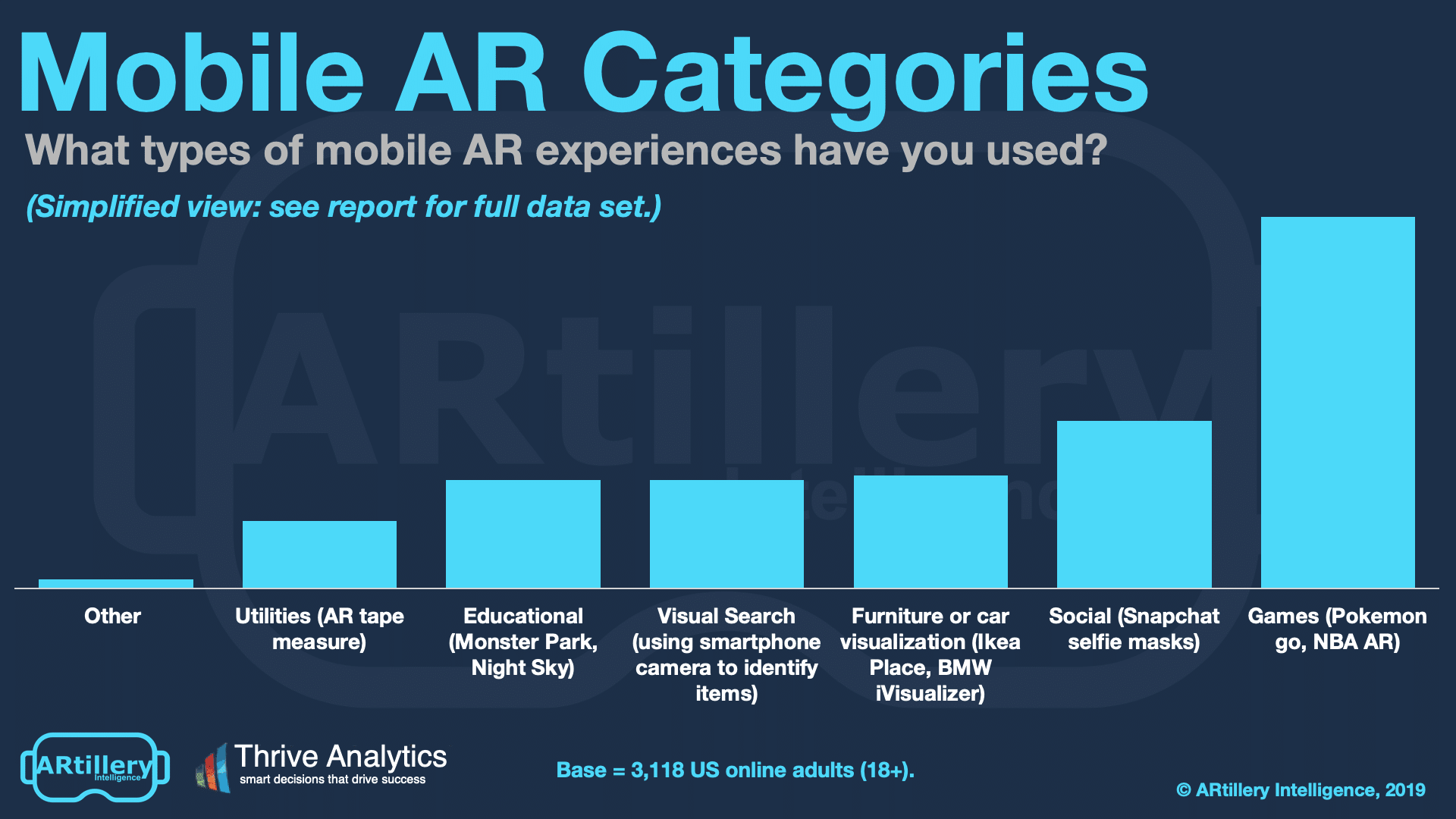

Google’s move to make visual search a thing already appears to be working. According to consumer survey data from ARtillery Intelligence (disclosure: a firm with which I’m affiliated) and Thrive Analytics, 24% of AR users already engage in visual search. We believe this will grow as Google pushes Lens as a common use case in the next era of search.

Panning back, AR aligns with other initiatives already underway at Google like voice search and the knowledge graph. These are part of a longstanding smartphone-era trend to bring Google from the “10 blue links” paradigm to answering questions and solving problems directly, as in the knowledge panel.

“It all begins with our mission to organize the world’s information and make it universally accessible and useful,” said Google CEO Sundar Pichai about visual search during the I/O keynote. “Today, our mission feels as relevant as ever. But the way we approach it is constantly evolving. We’re moving from a company that helps you find answers to a company that helps you get things done.”