How AR Will Fundamentally Change Search, Participating in an ‘Internet of Places’

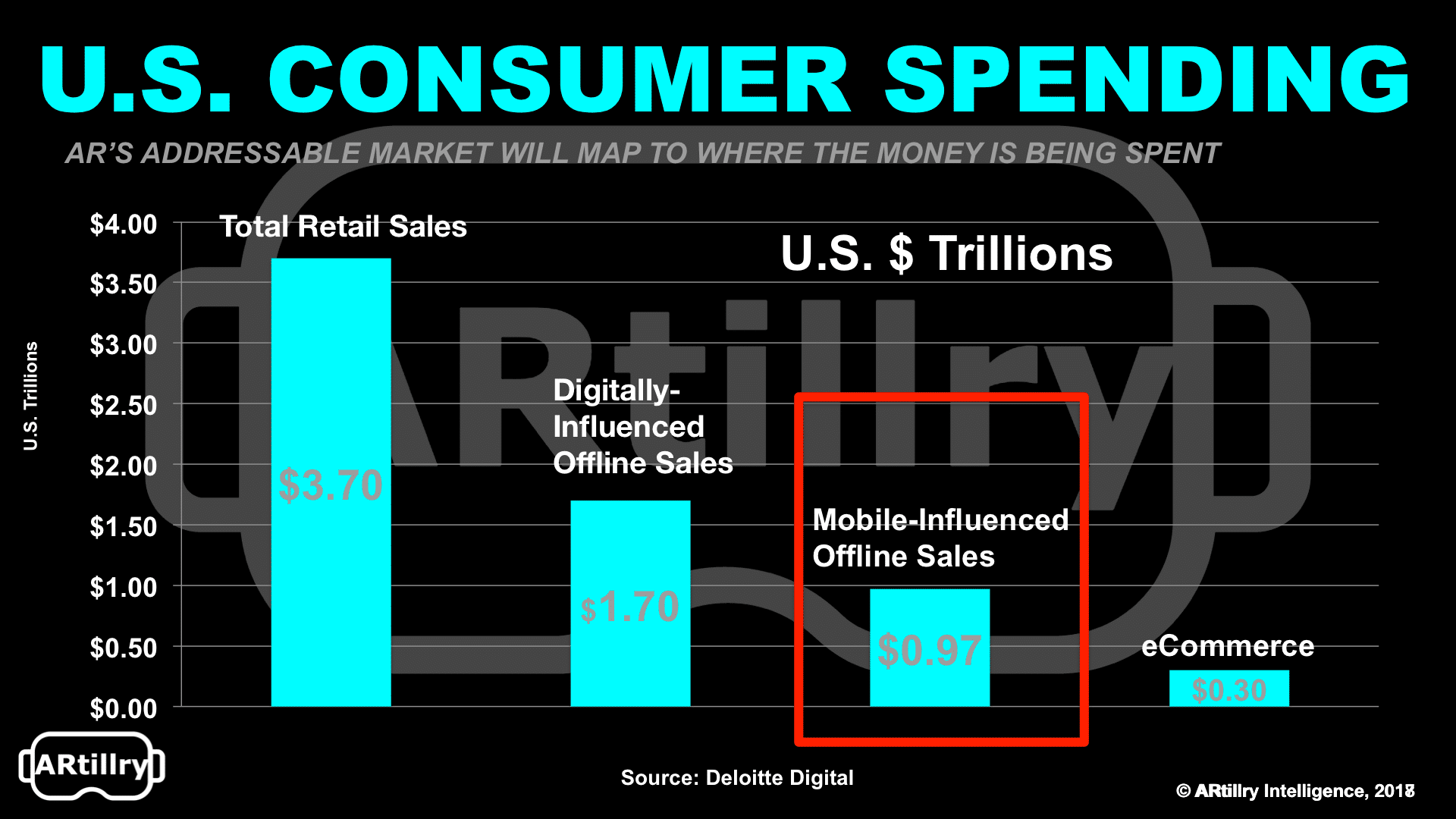

About $3.7 trillion is spent annually in consumer purchases in the U.S., according to Deloitte. But it’s often forgotten that only $300 billion of that (8%) is spent on e-commerce. This means that offline brick-and-mortar spending, though often overshadowed by its sexier online counterpart, is where the true scale occurs.

But digital media, like mobile search, still makes a big impact. Though spending happens predominantly offline, it’s increasingly influenced online. Specifically, $1.7 trillion (46% of that $3.7 trillion) is driven through online and mobile media. This is what many of us in local call online-to-offline (O2O) commerce.

O2O is one area where AR will find a home. Just think: Is there any better technology to unlock O2O commerce than one that literally melds physical and digital worlds? AR can shorten the gaps in time and space that currently separate online interactions (e.g., search) from offline outcomes.

We’re talking contextual information on items you point your phone at. AR overlays could help you decide where to eat, which television to buy, and where to buy the shoes you see worn on the street. This is what we’ve examined in the past as “Local AR,” and it will take many forms.

Visual Search

One of the first formats where Local AR will manifest is visual search. If you think about it, AR in some ways is a form of search. But instead of typing or tapping search queries in the traditional way, the search input is your phone’s camera, and the search “terms” are physical objects.

This analogy applies to many forms of search but is particularly fitting for local. Traditional (typed) local search performs best when consumers are out of home, using their smartphones. This is when “buying intent” is highest and when click-through rates and other metrics peak.

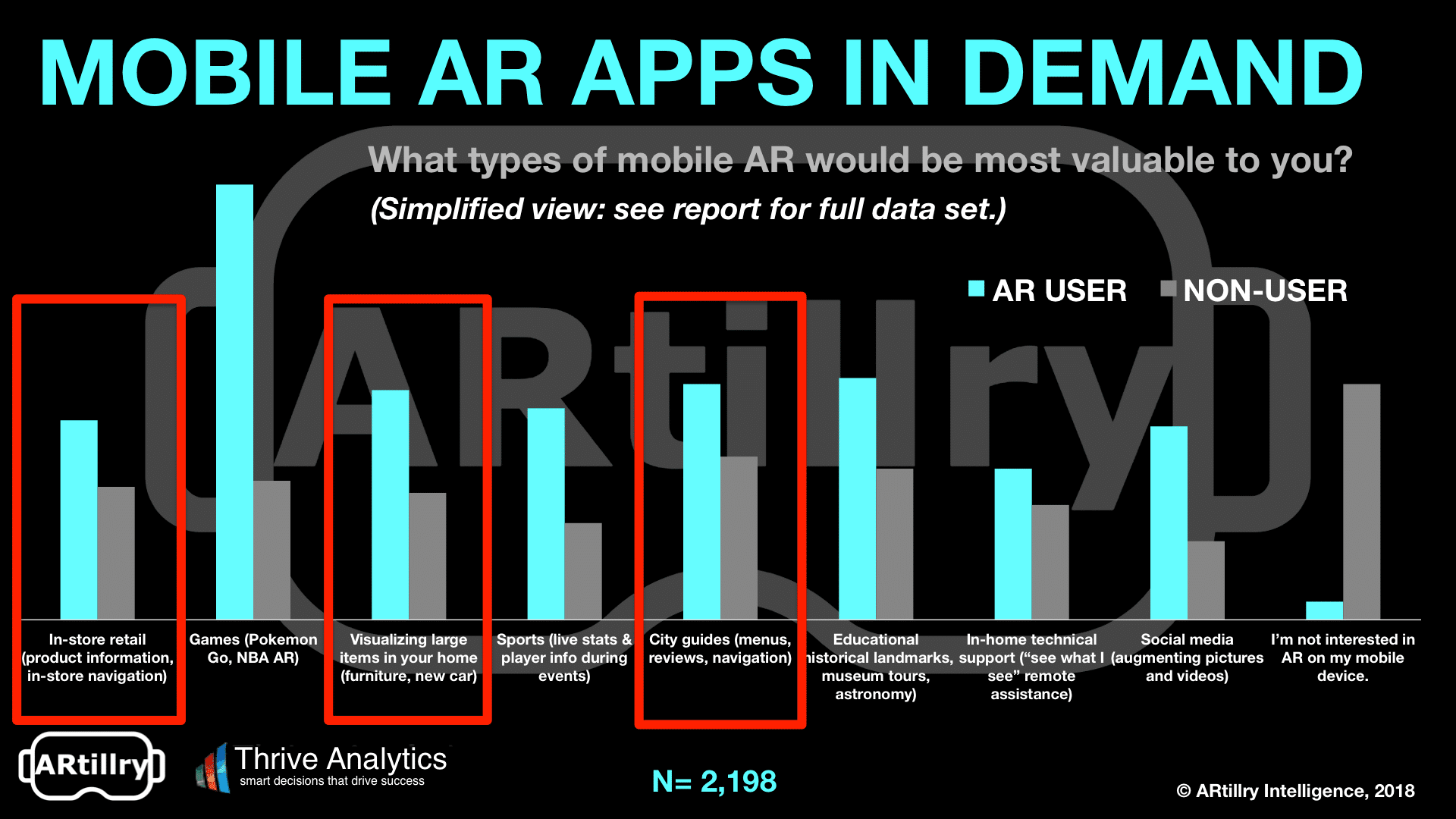

Furthermore, proximity-based visual searches through an AR interface could gain traction if recent AR consumer survey research is any indication. Among the categories and types of AR apps that consumers want, city guides, in-store retail, and commerce apps showed strong demand.

These proximity-based searches are conducive to AR because the phone is near the subject (think: a restaurant you walk by), and can therefore derive information and context after mapping it visually. This really just makes it an evolution of a search query—but done with the camera.

“A lot of the future of search is going to be about pictures instead of keywords,” Pinterest CEO Ben Silbermann said recently. His claim triangulates several trends: millennials’ heavy camera use, mobile hardware evolution, and AR software (such as ARKit) that further empowers that hardware.

The Internet of Places

These are some reasons why Google is keen on AR. As is common to its XR initiatives, Google’s AR efforts are driven to advance its core business. In other words, to continue dominating and deriving revenue from search, Google must establish a place in this next visual iteration of the medium.

“Think of the things that are core to Google, like search and maps,” said Google XR Partnership Lead Aaron Luber at the ARiA conference. “These are core things we are monetizing today and see added ways we can use [AR to bolster]. All the ways we monetize today will be ways that we think about monetizing with AR.”

For example, a key search metric is query volume (along with cost-per-click, click-through-rate, and fill rates). Visual search lets Google capture more “queries” when consumers want info. And these out-of-home moments, again, are “high intent” when monetization potential is greatest.

These aspirations will manifest initially in Google Lens. Using Google’s vast image database and knowledge graph, Lens will identify and provide information about objects at which you point your phone. For example, point your phone at a store or restaurant to get business details overlaid graphically.

This can all be thought of as an extension to Google’s mission statement to “organize the world’s information.” But instead of a search index and typed queries, local AR delivers information “in situ” (where an item is). And instead of a web index, this works towards an “Internet of places.”

But before we get too carried away in blue-sky visions—as is often done in XR industry rhetoric, trade shows, and YouTube clips—it’s important to acknowledge realistic challenges. There are several interlocking pieces including hardware, software, and most importantly the AR Cloud.

We’ll pick the discussion up there in the next Road Map column with a deeper dive on the AR Cloud. What is it, and who will build it?

For more on this topic, see Street Fight’s recent report on Local AR and the transformation that visually immersive technologies could bring to local search and discovery.

Mike Boland is Street Fight’s lead analyst, author of the Road Map column and producer of the Heard on the Street podcast. He covers AR & VR as chief analyst of ARtillry Intelligence and is SF President of the VR/AR Association. He has been an analyst in the local space since 2005, covering mobile, social and emerging tech.